Trainspotting: a window into addiction and recovery

April 24, 2012

Along with my colleague, epigeneticist Rob Martienssen, I spoke about mechanisms of addiction in the context of the movie “Trainspotting” last week at the Cinema Arts Center in Huntington. A link about our event explains more about the “Science on screen” series that the cinema is hosting.

Among the audience participants were recovering addicts and family members of people whose lives are colored by addiction. We talked about the changes in the brain that take place during drug use and addiction, and also about variability in the degree to which people are susceptible to addiction. Many of these topics dovetailed with scenes in the movie. For example, when Renton, the protagonist in “Trainspotting”, tries to quit using heroin, the withdrawal symptoms are much more severe for him than for his friend Simon. Rob speculated about whether epigenetic changes might explain why recovery from addiction is so challenging.

The lab’s first paper came out yesterday in the Journal of Neuroscience and was described rather amusingly in Science News Daily. In the paper, we test whether decisions about dynamic, time-varying stimuli benefit from multisensory inputs. In short, we find that such decisions do benefit from multisensory input, in the same way that decisions about static stimuli have been shown to do. There’s a twist, too: the auditory and visual stimuli that we use don’t need to be played synchronously. Even two streams of asynchronous clicks and flashes are more informative than either stream alone. To us, this argues strongly that the auditory and visual streams are integrated separately, and then a final decision is made about the combined estimates.
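To make the "integrate separately, then combine" idea concrete, here is a minimal sketch in Python. It is not the analysis from the paper: the event times, the variances, and the function names `estimate_rate` and `combine_estimates` are all made up for illustration, and the reliability-weighted average is the standard textbook rule for combining two independent estimates, assumed here rather than taken from our results.

```python
def estimate_rate(event_times, duration):
    """Integrate one stream on its own: events per second over the trial."""
    return len(event_times) / duration


def combine_estimates(est_a, var_a, est_v, var_v):
    """Reliability-weighted (inverse-variance) average of two independent estimates."""
    total_reliability = 1.0 / var_a + 1.0 / var_v
    w_a = (1.0 / var_a) / total_reliability
    w_v = (1.0 / var_v) / total_reliability
    combined = w_a * est_a + w_v * est_v
    combined_var = 1.0 / total_reliability
    return combined, combined_var


# Hypothetical one-second trial: clicks and flashes never coincide in time.
duration = 1.0
clicks = [0.05, 0.21, 0.38, 0.52, 0.70, 0.83, 0.95]
flashes = [0.11, 0.30, 0.44, 0.61, 0.77, 0.90]

# Each stream is integrated separately...
rate_a = estimate_rate(clicks, duration)
rate_v = estimate_rate(flashes, duration)

# ...and only the two estimates are combined (variances are assumed values).
var_a, var_v = 1.0, 2.0
combined, combined_var = combine_estimates(rate_a, var_a, rate_v, var_v)
print(combined, combined_var)
```

Because only the per-stream estimates are combined, the timing of individual clicks relative to individual flashes never enters the calculation, and the combined variance is smaller than either single-stream variance.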

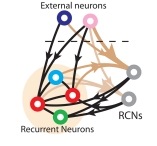

I started thinking about randomly connected networks during a recent visit to the Center for Theoretical Neuroscience, where I was a seminar speaker last week. The idea behind these networks, which have been championed recently by Larry Abbott, Stefano Fusi and others, is that neurons in some parts of the brain receive inputs from neurons with a broad array of response properties (see figure, below). This means that, at least from the point of view of an experimenter recording from such neurons, their responses seem at first to be quite confusing. One paper from Stefano Fusi’s group describes prefrontal neurons recorded during a task in which monkeys were deciding among a number of different cues, according to a rule that could change over the course of the session. The neurons were recorded in Earl Miller’s lab at MIT. Each neuron appeared to reflect a complicated combination of the two sensory cues and the particular context in which they were presented. The paper argues that responses like these are expected in randomly connected networks, and indeed could subserve the very flexibility that is needed for tasks that require dynamic combinations of sensory inputs and rules. Might this have implications for multisensory research? We don’t yet know, but like the Miller Lab, we present animals with a complex set of stimuli that vary along several dimensions. A randomly connected network might be the most efficient way for the animals to harvest the relevant information.

An interactive poster presentation

November 21, 2011

My two students, John Sheppard and David Raposo, presented their work at a Cold Spring Harbor poster session tonight. Our poster was a bit unusual: we wanted to communicate to our colleagues how we study decision-making, so we brought a demo version of our laboratory task to the poster session and challenged poster attendees to see how they measured up against our best subjects. We had many takers: about 40 people attempted the task, and we streamed the results from our data collection computer to an iPhone attached to the poster, which displayed them. Top marks go to Glenn Turner, Santiago Jaramillo and Josh Dubnau. Only one of the famed musicians from Greg Hannon’s lab was up for the challenge, and he was a top scorer as well, lending some credence to the idea that being a musician might confer some advantage on our task.

A visit to China for computational neuroscience

September 7, 2011

This summer, I was an instructor at the Computational and cognitive neurobiology course in Suzhou, China. This was my first visit to mainland China and I really enjoyed both the science and the tourism. One of the highlights of the course was a series of lectures from Upi Bhalla, who described his recent work on olfaction. An interesting finding he described is that rats combine olfactory inputs from two nostrils to smell “in stereo”. This allows them to be extremely efficient in following complex odor trails.

Another lecture I enjoyed was by Michael Hausser, who described experiments that explore the effects of injecting small amounts of noise into a network of neurons. He reports that small amounts of noise can have a major impact on the response of the circuit and concludes from this observation that any neural code worth its salt must be robust to these kinds of perturbations.

I gave two lectures: one on the sources and implications of neural variability, and a second one on decision-making.

Synesthesia Journal Club & Wine tasting

May 26, 2011

Today for our journal club, Haley presented a paper by Melissa Saenz & Christoph Koch: “The sound of change: visually-induced auditory synesthesia”. This paper is novel in that it is the first to explore the phenomenon of synesthesia for vision and audition. This was of particular interest to us since we study multisensory integration using auditory and visual stimuli. The paper was an important heads-up for us: the synesthetes in the study performed far better than controls on a single-sensory task, presumably because they experienced multisensory benefits that the control subjects didn’t. Our subjects might likewise benefit from such an advantage, so thinking about how this plays into our results could be important.

We followed the journal club with a Bordeaux-style tasting (French and California) to see if anyone had any kind of taste-related synesthesia. No one did, but we all enjoyed the wine nonetheless.

A new paper in Nature Neuroscience (about which I wrote a News & Views) is quite intriguing. The paper uses off-the-shelf mechanisms for neural computations that are well-established in primary visual cortex and applies them to new findings in multisensory processing. The idea is that neural responses can be described by both the feedforward inputs and also a normalizing response that includes a large pool of broadly-tuned neurons. The paper is about visual and vestibular interactions, but I hope we can use this kind of approach to understand visual-auditory processing as well.
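For readers who want the flavor of this computation, a generic divisive-normalization rule can be written as follows. This is the common textbook form and an assumption on my part, not necessarily the exact equation used in the paper; the symbols $E_i$ (feedforward drive to neuron $i$), $N$ (size of the normalization pool), $\sigma$ (a semi-saturation constant) and $n$ (an exponent) are introduced here purely for illustration.

$$
R_i = \frac{E_i^{\,n}}{\sigma^{n} + \frac{1}{N}\sum_{j=1}^{N} E_j^{\,n}}
$$

The numerator is the neuron's own feedforward input, and the denominator grows with the summed activity of the broadly tuned pool, so a strong input to the pool scales down the response of every neuron in it.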

First spikes recorded!

May 17, 2011

We are pleased to have recorded spikes from our first cortical neurons last week. We owe a lot to Santiago Jaramillo and also Petr Znamenski who helped us with all the technical hurdles. All the lab members worked together on this one and we were really pleased to see that all 8 tetrodes are working.

Watch this space to hear about what we discover! Our goal is to understand how these neurons reflect the integration of multisensory evidence during a decision-making task. Our subjects are experts at the task and we want to understand the neural circuitry that underlies their behavior.

A “reunion” of our in-house colloquium on pathways and representations for vision and audition

May 5, 2011

As described earlier in the blog, we have been having a mini colloquium series here at Cold Spring Harbor that has focused on sensory pathways in the cortex. This series has allowed us to make some interesting comparisons between audition and vision, but has left us at a loss in terms of figuring out how much information is actually carried in those pathways. To delve into these issues, we brought in a theorist, Jonathan Pillow from UT Austin, who spoke about his work on this topic.

As with the other talks in the series, this seminar was unbelievably well attended. Jonathan talked a bit about the history of Bayesian inference, and then described several recent lines of research from his laboratory. One topic that generated a lot of interest is a new model of decision-making in LIP that he is working on in conjunction with Alex Huk. The model puts forth that LIP neurons reflect not only sensory evidence for and against a decision, but also visual inputs unrelated to the decision, trial history, and saccadic activity. The idea that these task attributes drive LIP neurons is likely to be agreed upon by most physiologists who record there, but a model that explicitly incorporates them has perhaps been missing from the literature. We are waiting to see whether these guys will build urgency into their model, too!

Addiction talk in Manhattan

April 14, 2011

We attended a reception on the Upper East Side this week where I gave a talk on addiction. The talk was entitled “Addiction: insights about prevention and treatment from basic science.” In making connections between what we do and addiction, one of the most interesting outcomes was learning more about the cognitive deficits that take place in addicted brains. Addiction to certain substances causes changes in widespread cortical networks, including the dorsolateral prefrontal cortex and the orbitofrontal cortex. Some of the really interesting work in this area comes from Nora Volkow’s lab and Anto Bonci’s lab, both at NIDA.