Movements in monkeys & mice

September 14, 2022

September 15, 2022

Today’s paper, currently on the preprint server, is “Neural cognitive signals during spontaneous movements in the macaque.” It is co-first-authored by Sébastien Tremblay and Camille Testard at McGill University. The main take-home message of the paper is that in primate prefrontal cortex, neurons are modulated by both task variables and uninstructed movements, and that this mixed selectivity does not hinder the ability to decode variables of interest.

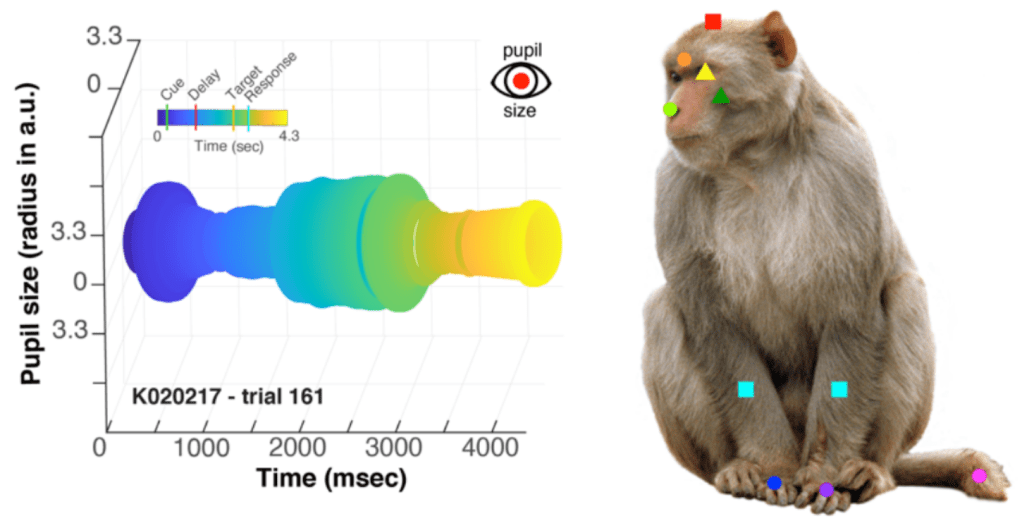

Approach: the authors measured neural activity from 4,414 neurons in Area 8 using Utah Arrays (chronically implanted electrodes). Animals were engaged in a visual association task in which they had to keep a cue in mind over a delay period while they waited for a target to instruct them about what to do. The authors took videos of the animals and labeled these using DeepLabCut so that they could examine the collective influence on neural activity of task variables, instructed movements (those the animals were trained to make), and uninstructed movements. They also measured pupil diameter. The data visualizations for both of these are quite nice! I mean, look at the pupil!

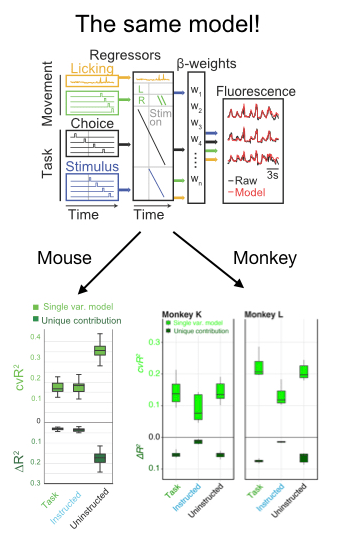

In addition to a number of nice analyses about the mixed selectivity of neurons for parameters like the location and identity of the target, the authors deployed an encoding model to compare the relative influence on neural activity of a number of parameters. Fortuitously, they took advantage of existing code in my lab developed by Simon Musall and Matt Kaufman for our 2019 paper. The reason this was so helpful is that it allows the reader to easily compare findings from two different experiments. This is really unusual in a paper!! We need more of this in the field! In this case it totally facilitated a cross-species comparison, which is not usually very easy to do. They found, as we did, that uninstructed movements have a big impact on neural activity (left). These movements included the cheeks, nose, arms, pupil, and the raw motion energy from the video. For me, it was super cool to see the contribution of movements to single-trial activity in such a different area and species. Importantly, they tested their ability to decode parameters of interest given the magnitude of movement-driven activity. They could! This is reminiscent of previous observations from Carsen Stringer, who argued that in mouse V1, movement-driven activity is mostly orthogonal to stimulus-driven activity, preventing movements from contaminating V1’s representation of the stimulus.
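For readers who have not met this kind of encoding model, here is a minimal sketch of the general idea on simulated data. It is not the Musall and Kaufman code and not the authors' pipeline: the regressors, weights, and function names (`ridge_fit`, `cv_r2`) are all made up for illustration, and it uses a plain closed-form ridge regression. The point is just to show how cross-validated explained variance can be split into what a group of regressors explains alone and what it adds uniquely on top of the others.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge regression weights: (X'X + lam*I)^-1 X'y."""
    n_features = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(n_features), X.T @ y)

def cv_r2(X, y, lam=1.0, n_folds=5):
    """Cross-validated R^2: predict each held-out fold from the other folds."""
    n = X.shape[0]
    pred = np.zeros_like(y)
    for test_idx in np.array_split(np.arange(n), n_folds):
        train = np.ones(n, dtype=bool)
        train[test_idx] = False
        w = ridge_fit(X[train], y[train], lam)
        pred[test_idx] = X[test_idx] @ w
    ss_res = np.sum((y - pred) ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

# Simulated data (assumption): 3 task regressors and 5 movement regressors,
# with movements driving the simulated neuron more strongly than the task.
rng = np.random.default_rng(0)
n_samples = 2000
task = rng.standard_normal((n_samples, 3))
movement = rng.standard_normal((n_samples, 5))
activity = (task @ np.full(3, 0.5)
            + movement @ np.ones(5)
            + rng.standard_normal(n_samples))

r2_full = cv_r2(np.hstack([task, movement]), activity)
r2_task_only = cv_r2(task, activity)
r2_move_only = cv_r2(movement, activity)

# Unique contribution of a group: what is lost when that group is left out.
unique_task = r2_full - r2_move_only
unique_move = r2_full - r2_task_only

print(f"full model cvR^2:      {r2_full:.2f}")
print(f"task only cvR^2:       {r2_task_only:.2f}")
print(f"movement only cvR^2:   {r2_move_only:.2f}")
print(f"unique task / movement: {unique_task:.2f} / {unique_move:.2f}")
```

Because the same recipe can be run on any dataset with labeled task and movement regressors, the resulting numbers are directly comparable across experiments, which is what makes shared analysis code so useful here.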

Skeptic’s corner: This paper was really a pleasure to read, and my main confusion came from the framing of the result in the discussion. The authors found that task variables accounted for a bit more single-trial variance in their experiment than in ours. I agree with their assessment, which is that it is hard to know how to interpret this difference because the recordings in this paper are from an area whose activity is strongly driven by exactly the sorts of images they used. We instead measured activity cortex-wide. Given this methodological difference, I was a bit puzzled by the statement that, “Mice and primates diverged ~100 million years ago, such that findings from mice research sometimes do not translate to nonhuman primates.” I mean, that statement is for sure true, but this particular paper seemed instead like evidence for the opposite: it points to shared computations in diverse brains. Along the same lines, they say “Perhaps in nonhuman primates, association cortical areas evolved neural machinery independent from movement circuits, such that cognitive signals can be fully dissociated from the immediate sensorymotor environment.” I was again a bit confused because the take-home of the paper seemed to be that movements modulated neural activity. The issue at hand here might be the extent to which the movement signals “sully” the waters, that is, disrupt the ability of a downstream area to decode a task parameter of interest. Here, decoding is preserved even in the face of movement, which is cool. But again, I think the situation appears to be the same in mice and monkeys: the movements live in an orthogonal subspace so don’t get in the way of decoding. Maybe Carsen Stringer will weigh in here; I feel like this is exactly what her paper showed in mice, albeit using a different analysis.
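To make the orthogonal-subspace intuition concrete, here is a toy simulation. It is only a sketch under assumptions I am making up for illustration (50 simulated neurons, a binary task variable, a single movement dimension, and a least-squares linear decoder); it is not the analysis from either paper. The same large movement signal is added either along a direction orthogonal to the task axis or along the task axis itself, and a decoder is trained on half the trials and tested on the rest.

```python
import numpy as np

rng = np.random.default_rng(1)
n_neurons, n_trials = 50, 4000

# Unit-length population direction that carries the task variable.
task_axis = rng.standard_normal(n_neurons)
task_axis /= np.linalg.norm(task_axis)

# A second direction, made orthogonal to the task axis (Gram-Schmidt step).
raw = rng.standard_normal(n_neurons)
orth_axis = raw - (raw @ task_axis) * task_axis
orth_axis /= np.linalg.norm(orth_axis)

def decode_accuracy(move_axis, move_gain, rng):
    """Held-out accuracy of a least-squares linear decoder of the task label."""
    sign = 2.0 * rng.integers(0, 2, n_trials) - 1.0  # task label as -1 / +1
    activity = (sign[:, None] * task_axis
                + move_gain * rng.standard_normal((n_trials, 1)) * move_axis
                + 0.5 * rng.standard_normal((n_trials, n_neurons)))
    X = np.column_stack([activity, np.ones(n_trials)])  # add intercept column
    half = n_trials // 2
    w, *_ = np.linalg.lstsq(X[:half], sign[:half], rcond=None)
    pred = np.sign(X[half:] @ w)
    return float(np.mean(pred == sign[half:]))

acc_none = decode_accuracy(orth_axis, 0.0, rng)     # no movement signal
acc_orth = decode_accuracy(orth_axis, 5.0, rng)     # large, orthogonal
acc_aligned = decode_accuracy(task_axis, 5.0, rng)  # large, along task axis

print(f"no movement:         {acc_none:.2f}")
print(f"orthogonal movement: {acc_orth:.2f}")
print(f"aligned movement:    {acc_aligned:.2f}")
```

In this toy setting the movement signal is five times larger than the task signal, yet it only hurts decoding when it shares a direction with the task variable. Movement can dominate the variance and still leave the readout intact, which is how I read both sets of results.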

A final nugget: Figure S9 shows that the correct target can be predicted with 95% accuracy from facial movements alone, even before the animal knows where the target will appear on the screen. Wow!

Thanks to Matt Kaufman and Simon Musall for a fun discussion on this topic.